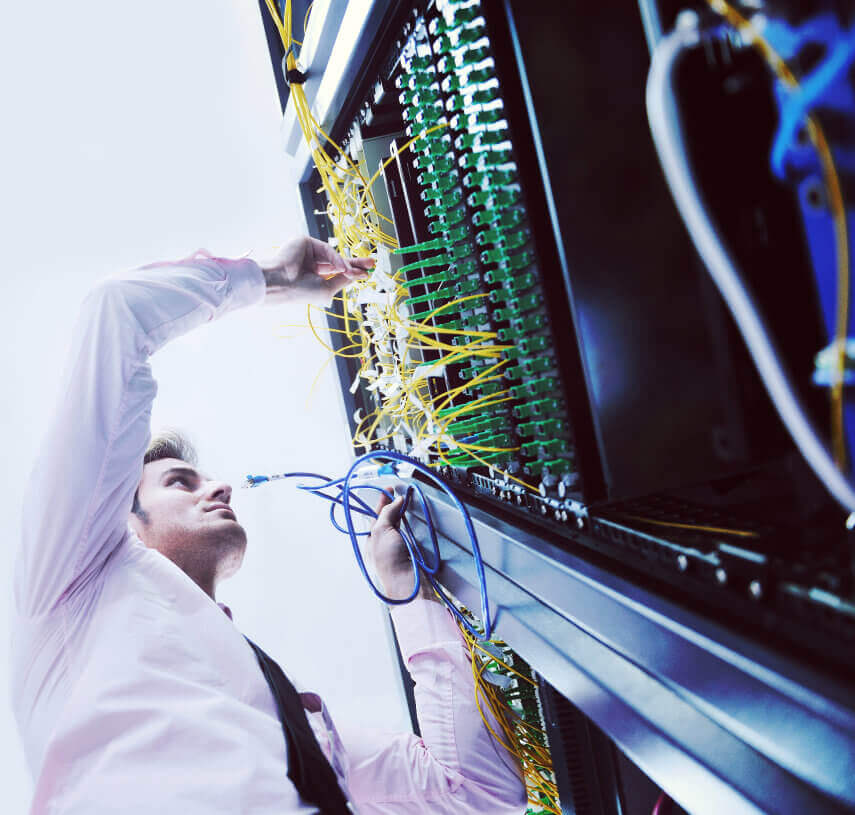

Understanding your business requirements helps us build the best hosting and cyber security solution, tailored to your needs at the best possible price.

Hosting Solutions

With three hosting packages ready to start, we have an option for every business.

We help customers become modern, technology-centric organisations built on best practice, secure server infrastructure and reliable, secure hosting service delivery.

Save 40% on Cloud Costs

Our free Cloud Cost Assessment, powered by CloudHealth, could save you up to 40% on cloud costs.

With Over 20 Years’ Experience

Partner with us and benefit from our extensive expertise to guide you to the right solution.

- ISO 27001-Certified

- Cyber Essentials Plus-Certified

- Crown Commercial Service Supplier

- Investors In People

Speak to one of our experts and find the best-practice solution for you.

020 3745 7706

Trusted by teams from world-leading organisations

Services & solutions tailored to your sector

Over the last 20 years, we have worked across a wide range of sectors, both public and private. Chat to us today to learn more about our experience in your field.

Discover how we’ve helped others

View all Sectors

Accreditors & Partners

Latest Insights

Read the latest news, research and expert views from our master craftsmen on cyber security and hosting issues, cyber risk, threat intelligence, network security, incident response and cyber strategy.